Zhi Tan

Assistant Professor

Northeastern University

zhi.tan at northeastern.edu

Research

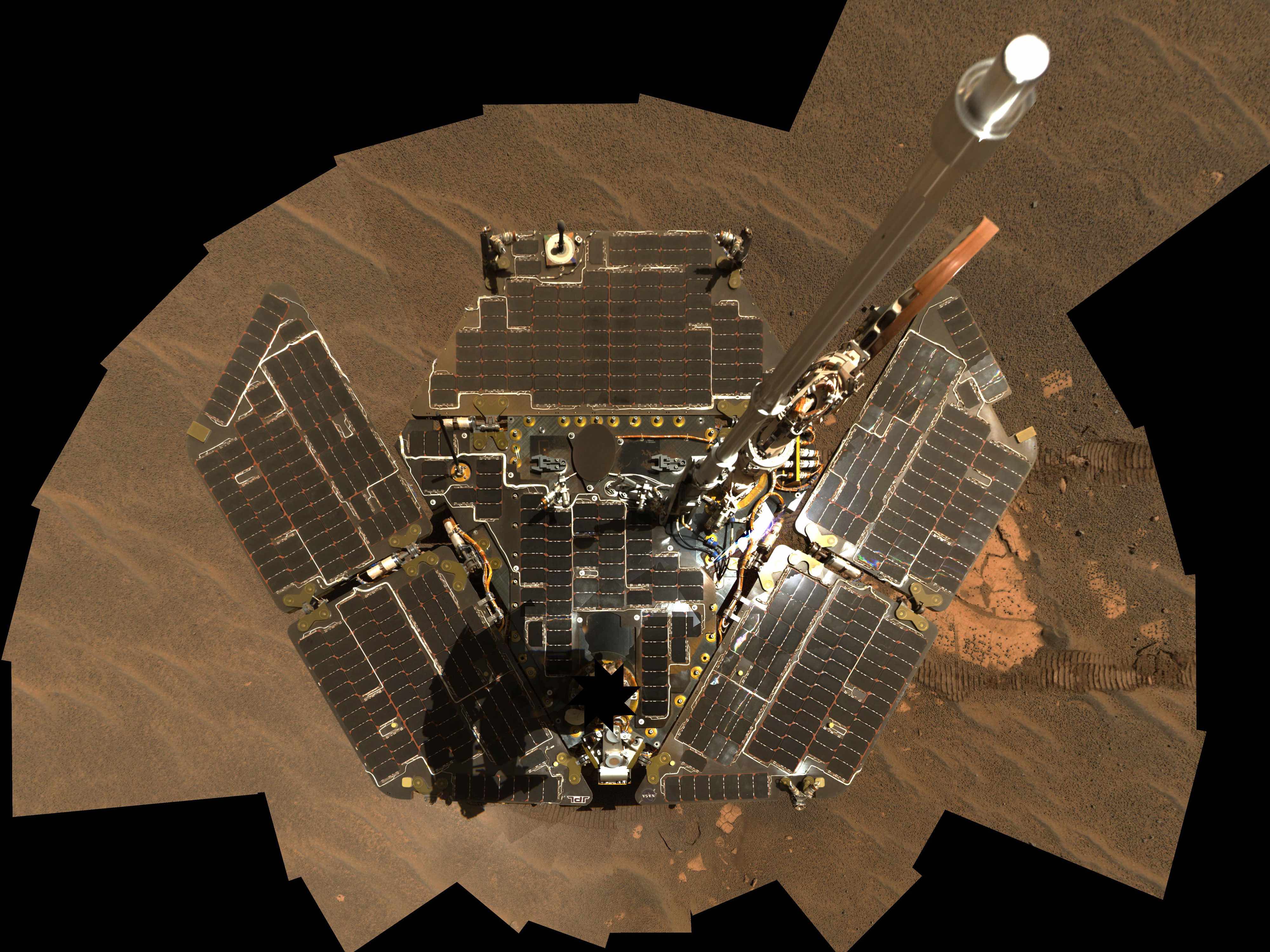

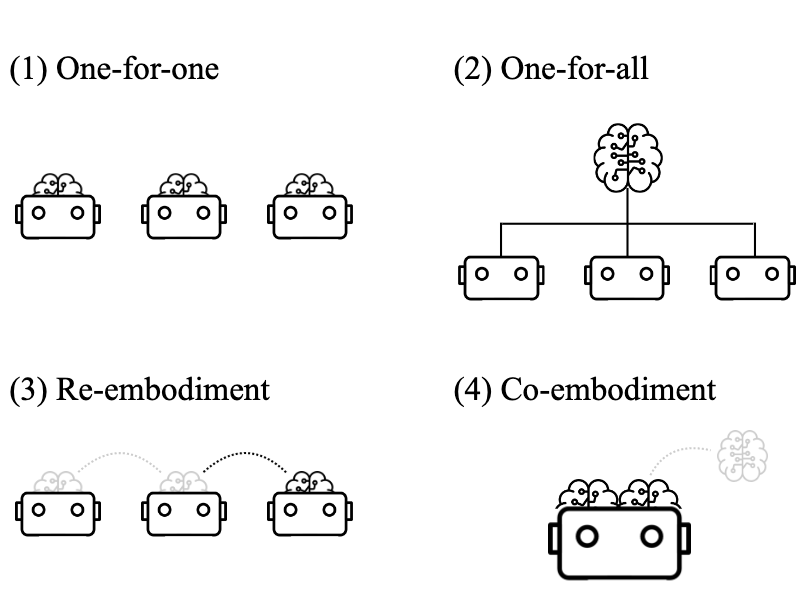

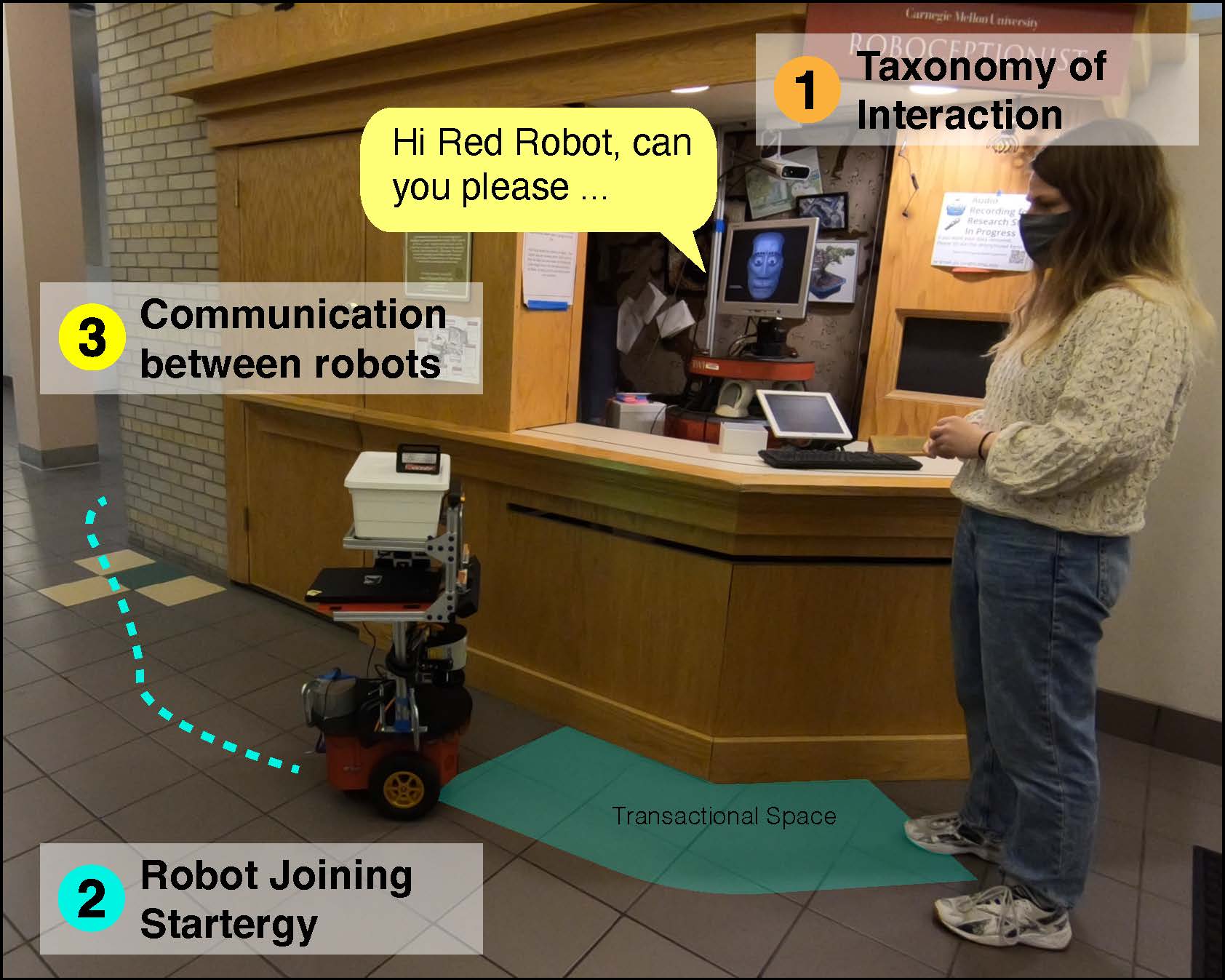

Sequential Human Interaction with Multiple Robots

Our future will likely involve humans interacting with multiple robots, whether because a single robot lacks the capability to complete a task, because multiple robots are more efficient, or because a service inherently requires multiple robots. In this line of work, we explored how to design sequential interactions with multiple robots. We created a taxonomy for this space [HRI'21], studied how robots should talk to each other [HRI'19], developed strategies for robots joining an existing one-on-one interaction [ICMI'22], and deployed our system in the field [Thesis].

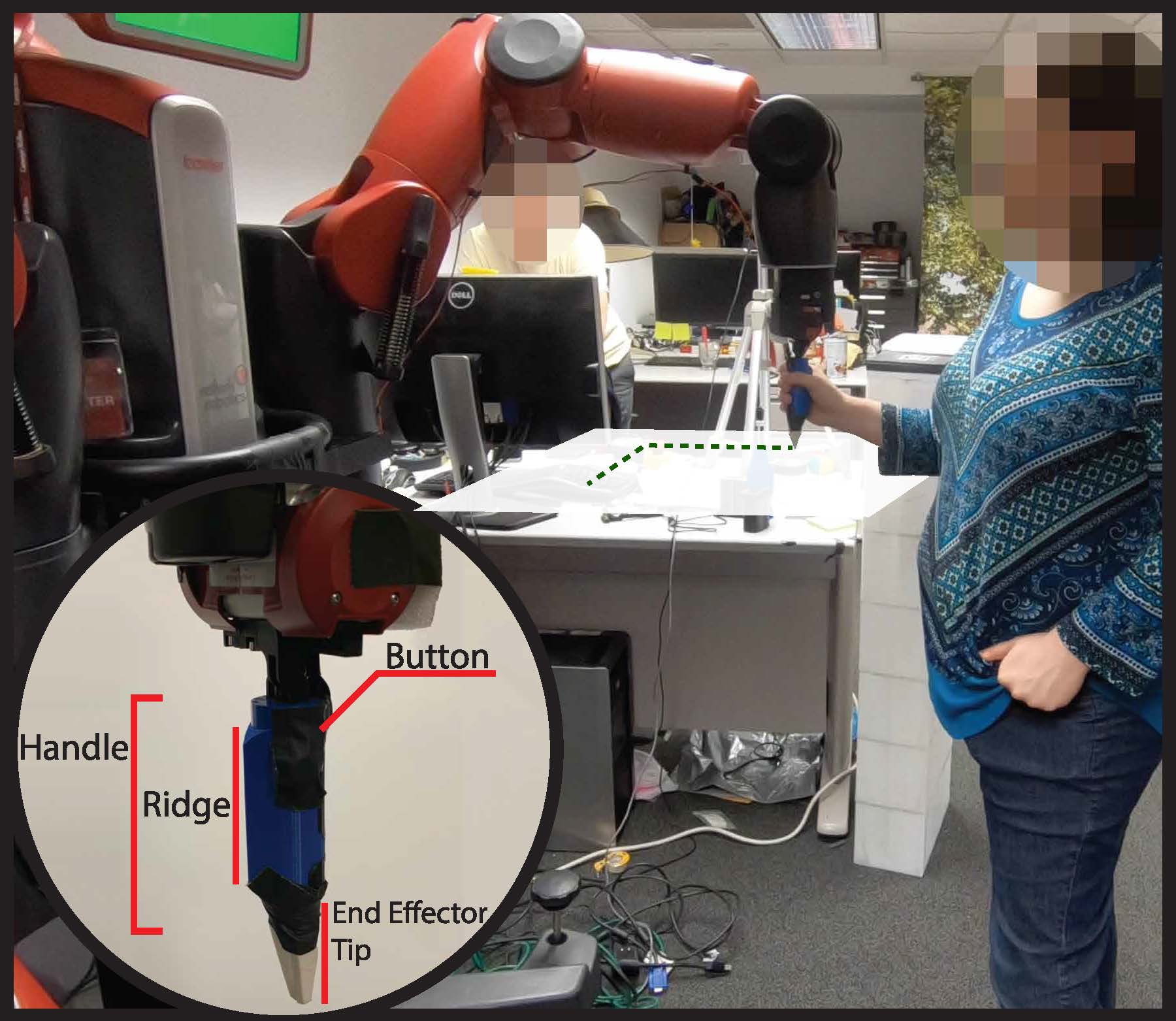

Assistive Navigation Robots in Complex Indoor Spaces

Navigating complex, unfamiliar indoor spaces remains difficult for people with visual impairments. In this line of research, we explored how various types of robots could help people navigate indoor spaces, designing algorithms for mobile robots [IROS'19, ASSETS'21 LBR], stationary manipulators [ASSETS'19], and small spherical robots [RO-MAN'18].

Leveraging Other Intelligent Systems in Human-Robot Interaction

Future human-robot interactions are likely to take place in environments with other intelligent systems, such as screens and smart home sensors. In my past work, I demonstrated an HRI system that takes advantage of a co-located 50-inch touch screen and manages users' attention between the robot and the touch screen [HRI'20 LBR]. My ongoing work explores how robots can leverage additional knowledge from smart home sensors to improve their efficiency.

Designing Longitudinal Assistive Robots

In collaboration with other researchers in AI-CARING, we are taking a user-centric approach to designing systems that tackle challenges faced by people with mild cognitive impairment living at home. We are currently (1) designing systems that provide safety instructions, (2) creating methods to summarize events for remote care partners, and (3) developing smart-home simulators to advance AI/ML research.

Other Projects

Robots in Society